How to use Google’s Antigravity IDE without hitting rate limits

One of the hottest recent releases is Google’s Antigravity integrated development environment (IDE). Unfortunately, it comes with strict rate limits, and they are very easy to hit.

I pay for Google AI Pro and still hit the rate limit after running only two or three prompts. Luckily, through trial and error, I found a way to use Antigravity without hitting those limits as often.

Optimize your costs versus your speed

If you want to get the most out of Google Antigravity, you need to choose wisely which model you use. Usage quotas are incredibly tight, and you can exceed them in the middle of a prompt, which means the quota you spent on that prompt is wasted. The simplest and most immediate thing you can do to prevent your tasks from stopping abruptly is to move most of your daily coding work to the Gemini 3 (Low) model. Don’t take Google’s “generous” wording at face value, because the quota only refreshes once every five hours.

If you rely too much on the computationally intense Gemini 3 (High) thinking level for deep, parallel exploration of reasoning paths, you’ll likely run out of quota in the middle of a task. This happened to me twice, both times because I gave it too much work in a single prompt. The consumption is high because the model generates hidden “thinking tokens” during its internal deliberations, and these tokens count directly toward your overall cost and quota usage.
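To make the effect of thinking tokens concrete, here is a rough back-of-the-envelope sketch. Every number in it is made up for illustration: Antigravity does not publish its quota as a token count, and `PromptUsage`, `prompts_until_exhausted`, and `QUOTA` are hypothetical names of my own. The only point is that hidden thinking tokens can dominate the bill even when the visible reply is the same size.

```python
from dataclasses import dataclass


@dataclass
class PromptUsage:
    """Token counts for one prompt (all values here are illustrative)."""
    input_tokens: int
    output_tokens: int
    thinking_tokens: int  # hidden reasoning tokens that never appear in the reply

    @property
    def billed_tokens(self) -> int:
        # Assumption: thinking tokens count against the quota like any other token.
        return self.input_tokens + self.output_tokens + self.thinking_tokens


def prompts_until_exhausted(quota: int, usage: PromptUsage) -> int:
    """How many prompts of this size fit into one quota window."""
    return quota // usage.billed_tokens


QUOTA = 60_000  # hypothetical budget per five-hour window

low = PromptUsage(input_tokens=2_000, output_tokens=1_000, thinking_tokens=500)
high = PromptUsage(input_tokens=2_000, output_tokens=1_000, thinking_tokens=12_000)

print(prompts_until_exhausted(QUOTA, low))   # 17
print(prompts_until_exhausted(QUOTA, high))  # 4
```

With these invented figures, the same prompt fits 17 times into a window on Low but only 4 times on High, which roughly matches how quickly High ran dry for me.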

Low Thinking mode is designed to limit the model’s search space, so responses come back with much lower latency. That makes it a good fit for most routine coding tasks, and it helps you stay within the quota set during this free preview period.

I try to avoid High until Low has failed at the same job at least three times, which does happen. By then I know where Low goes wrong, so I can give High a much more precise prompt that sidesteps those problems. Keep in mind that once High runs out, it may be a while before its quota refreshes.
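My escalation habit can be written down as a small sketch. This is a mental model, not an Antigravity API: in practice I switch models by hand in the IDE. The `run_model` callback is a hypothetical stand-in for "run the task on this model and tell me whether the result was acceptable".

```python
from typing import Callable, Tuple

MAX_LOW_ATTEMPTS = 3  # my rule of thumb: give Low three tries before escalating


def run_with_escalation(
    task: str,
    run_model: Callable[[str, str], bool],  # hypothetical: (model, task) -> succeeded?
    max_low_attempts: int = MAX_LOW_ATTEMPTS,
) -> Tuple[str, int]:
    """Try the cheap model first; fall back to the expensive one once.

    Returns (model that succeeded or "none", total attempts made).
    """
    for attempt in range(1, max_low_attempts + 1):
        if run_model("low", task):
            return "low", attempt

    # Low failed every time: spend High quota on one carefully worded attempt.
    total_attempts = max_low_attempts + 1
    if run_model("high", task):
        return "high", total_attempts
    return "none", total_attempts
```

For example, with a fake runner that only ever succeeds on High, `run_with_escalation("fix the parser", fake_runner)` makes three Low attempts and one High attempt.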

Favor stand-alone tasks rather than interactive ones

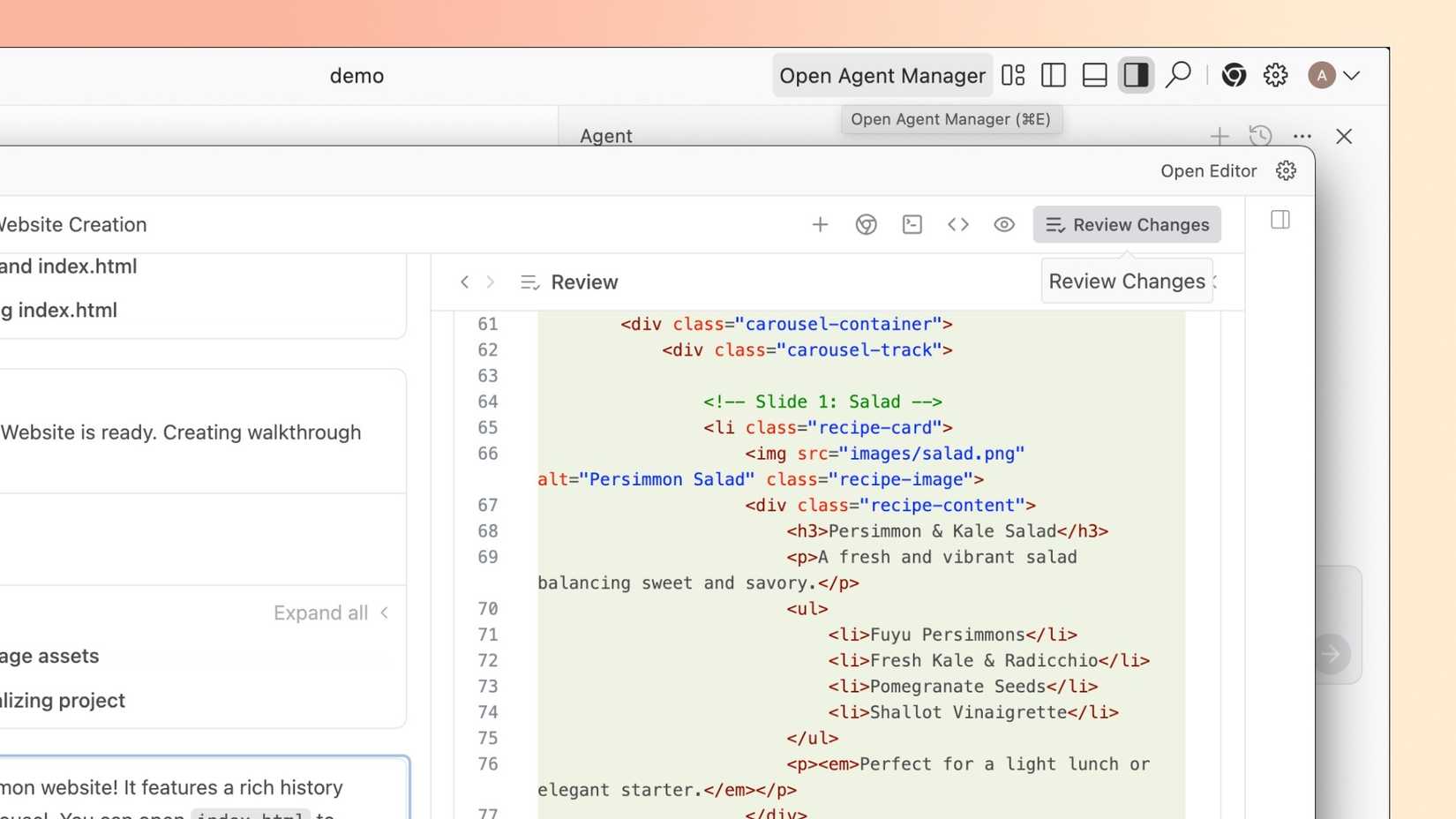

If you want to protect your usage quota in Google Antigravity, the key is to prioritize self-contained workflows over intense, real-time interaction. The platform divides work into two distinct modes: the synchronous Editor view and the asynchronous Manager view.

The Editor view handles the interactive back-and-forth and gives you real-time support. Because it is so interactive, this mode naturally consumes tokens quite quickly. If you’re trying to save your quota, your best bet is to use the Agent Manager, which Google calls Mission Control, and delegate larger, multi-step tasks to agents. The agents then work autonomously in the background.

To conserve quota, be aware that large, long-running agent tasks delegated in the Manager view should use models other than Gemini 3 (Low). This keeps your limited Low quota from running out quickly and gives you higher overall throughput. It also frees you up to act more like an architect, orchestrating parallel work instead of coding line by line.

You should also use Artifacts, the verifiable deliverables that an agent generates as it works. Artifacts are essentially structured outputs, such as task lists, implementation plans, screenshots, and even browser recordings.

They are essential for building user trust because they document both what the agent plans to do and how it verifies the code it executes. For example, an agent can use Artifacts to verify its own work by taking screenshots or videos of the application running directly in the browser. Artifacts also make a continuous feedback loop possible.

Developers can drop Google Docs-style comments directly on an artifact, and the agent automatically incorporates that feedback into the task it is currently executing. Because you don’t have to force a full restart, you waste fewer tokens.

The best way to use Google Antigravity without running out of tokens comes down to recognizing that the LLMs it supports are not interchangeable. In other words, you need to match the model to what the task actually requires. Antigravity makes this possible by giving you access to several models directly, such as Gemini 3 Pro, Claude Sonnet 4.5, and OpenAI’s GPT-OSS.

Claude Sonnet 4.5 is strongest at detailed reasoning and writing documentation. GPT-OSS, on the other hand, is valuable for rapid prototyping. Use each model where it excels; otherwise you will burn tokens on work that another model would handle better. So don’t reach for the most powerful model every time.
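One way to keep yourself honest about this is to write the routing down as a simple lookup table. The sketch below only encodes the preferences described in this article; the task labels, `ROUTES`, and `pick_model` are hypothetical names of my own, and Antigravity itself does not expose anything like this, since you pick the model by hand in the IDE.

```python
# Task kind -> model, following the strengths described in this article.
# The task labels are my own invention; adjust them to your workflow.
ROUTES = {
    "detailed_reasoning": "Claude Sonnet 4.5",
    "documentation": "Claude Sonnet 4.5",
    "rapid_prototyping": "GPT-OSS",
    "routine_coding": "Gemini 3 (Low)",
}

# Assumption: anything unclassified goes to the cheapest Gemini setting first.
DEFAULT_MODEL = "Gemini 3 (Low)"


def pick_model(task_kind: str) -> str:
    """Return the model this article would suggest for a given kind of task."""
    return ROUTES.get(task_kind, DEFAULT_MODEL)


print(pick_model("documentation"))      # Claude Sonnet 4.5
print(pick_model("rapid_prototyping"))  # GPT-OSS
print(pick_model("rename_variables"))   # Gemini 3 (Low)
```

The default matters more than the table: anything you haven’t classified starts on the cheapest option rather than the most expensive one.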

When managing usage quotas, you should actively move tasks that do not absolutely need Antigravity’s agent capabilities out of the platform. Work that isn’t really agentic coding, like running complex commands, managing data, or even simple debugging, is probably better done in your local development environment or with external tools such as a standalone terminal. This keeps the IDE from consuming quota unnecessarily, so you don’t hit those hard rate limits too soon.

Antigravity currently has so many restrictions that I wouldn’t rely on it as my only tool yet. Even with my AI Pro account, I hit the quota more often than I’d like.

With these disciplined approaches, you get the most power, speed, and parallel capacity that Google Antigravity’s quotas allow. Since this is an early release, it is reasonable to expect updates in the future, though I haven’t seen any indication of changes yet.

These rate limits may ease over time, but they will likely be looser for those who pay. So if this overview has convinced you that Google’s VS Code clone is what you want, you should think about signing up for a paid AI plan.