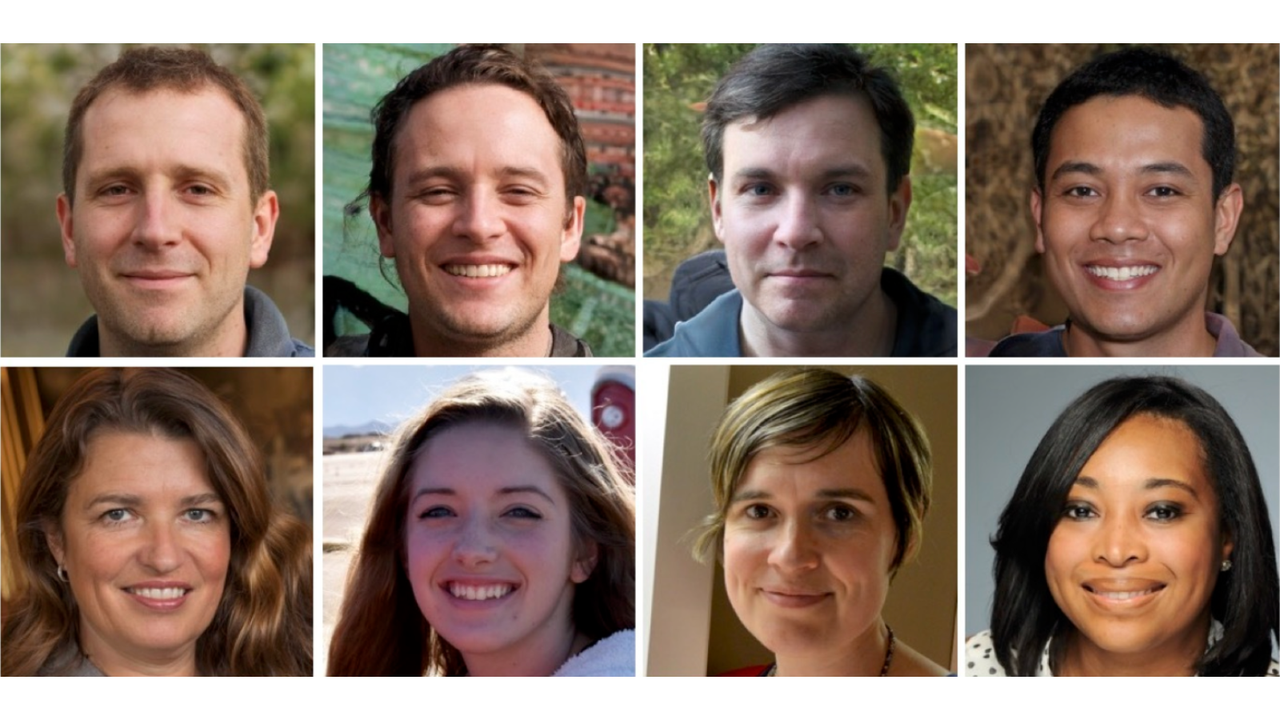

AI is getting better and better at generating faces — but you can train to spot the fakes

Face images generated by artificial intelligence (AI) are so realistic that even “super recognizers” (an elite group with exceptionally strong face-processing abilities) are no better than chance at detecting fake faces.

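People with typical recognition abilities are worse than chance: more often than not, they think AI-generated faces are real. But a five-minute training session on common AI rendering errors significantly improved detection in both groups, a study found.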

“I think it was encouraging to find that our fairly short training procedure significantly increased performance in both groups,” lead study author Katie Gray, an associate professor of psychology at the University of Reading in the United Kingdom, told Live Science.

Surprisingly, the training increased accuracy by similar amounts in super recognizers and typical recognizers, Gray said. Since super recognizers are better at spotting fake faces to begin with, this suggests that they rely on another set of cues, not just rendering errors, to identify fake faces.

Gray hopes that scientists will be able to harness super recognizers’ superior detection skills to better spot AI-generated images in the future.

“To best detect synthetic faces, it might be possible to use AI detection algorithms with a human-in-the-loop approach, where that human is a trained SR [super recognizer],” the authors wrote in the study.

Deepfake detection

In recent years, AI-generated images have flooded the internet. Deepfake faces are created using a two-stage AI algorithm called a generative adversarial network. First, a generator produces a fake image based on real-world images; then a discriminator examines the result and judges whether it is real or fake. Over many iterations, the fake images become realistic enough to fool the discriminator.
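
For readers who want the formal version, this tug-of-war between the two networks is commonly written as the minimax objective from the original GAN formulation (Goodfellow et al., 2014). The symbols are standard notation rather than anything taken from the study: $G$ is the generator, $D$ is the discriminator, $x$ is a real image, and $z$ is random noise fed to the generator.

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to push this value up by scoring real images near 1 and generated images near 0, while the generator tries to push it down by producing images that the discriminator scores as real.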

These algorithms have now improved to the point that people are often duped into judging fake faces as more “real” than real faces, a phenomenon known as “hyperrealism.”

As a result, researchers are now trying to design training regimens that can improve people’s ability to detect AI faces. These training sessions highlight common rendering errors in AI-generated faces, such as a middle tooth, an odd-looking hairline or unnatural-looking skin texture. They also point out that fake faces tend to be more proportional than real ones.

In theory, so-called super recognizers should be better at detecting fakes than the average person. Super recognizers are individuals who excel at facial perception and recognition tasks, such as being shown two photographs of unfamiliar individuals and asked whether they show the same person. But to date, few studies have examined super recognizers’ ability to detect fake faces, or whether training can improve their performance.

To fill this gap, Gray and her team ran a series of online experiments comparing the performance of a group of super recognizers to that of typical recognizers. The super recognizers were recruited from the Greenwich Facial and Voice Recognition Lab volunteer database; they had ranked in the top 2% of individuals in tasks where they were shown unfamiliar faces and had to remember them.

In the first experiment, a face appeared on the screen that was either real or computer-generated. Participants had 10 seconds to decide whether the face was real or not. The super recognizers performed no better than if they had guessed randomly, spotting only 41% of the AI faces. Typical recognizers correctly identified only about 30% of the fakes.

The two groups also differed in how often they thought real faces were fake. This occurred in 39% of cases for super recognizers and approximately 46% for typical recognizers.

The next experiment was identical, but included a new set of participants who received a five-minute training session in which they were shown examples of errors in AI-generated faces. They were then tested on 10 faces and received real-time feedback on their accuracy in detecting fakes. The final step of the training involved a summary of rendering errors to watch out for. Participants then repeated the original task from the first experiment.

Training significantly improved detection accuracy, with super recognizers detecting 64% of fake faces and typical recognizers spotting 51%. The rate at which each group mislabeled real faces as fake was about the same as in the first experiment, with super recognizers and typical recognizers rating real faces as “not real” 37% and 49% of the time, respectively.
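
The hit rates and false-alarm rates reported for the two experiments can be folded into a single score to see how each group compares with chance. The sketch below is illustrative only: it assumes each test contained equal numbers of real and AI-generated faces (the article does not say), and the names `REPORTED`, `balanced_accuracy` and `summarize` are made up for this example rather than taken from the study.

```python
# Rates as reported in the article, expressed as fractions of trials.
# First value: share of AI-generated faces correctly called fake (hit rate).
# Second value: share of real faces wrongly called fake (false-alarm rate).
REPORTED = {
    ("super recognizers", "untrained"): (0.41, 0.39),
    ("typical recognizers", "untrained"): (0.30, 0.46),
    ("super recognizers", "trained"): (0.64, 0.37),
    ("typical recognizers", "trained"): (0.51, 0.49),
}


def balanced_accuracy(hit_rate, false_alarm_rate):
    """Average of accuracy on fake faces and accuracy on real faces.

    Assumption: real and fake faces were equally common in the test,
    so a pure guesser would score 0.5.
    """
    correct_on_real = 1.0 - false_alarm_rate
    return (hit_rate + correct_on_real) / 2.0


def summarize(reported=REPORTED):
    """Return the balanced accuracy for every group and condition."""
    return {
        key: round(balanced_accuracy(hit, false_alarm), 3)
        for key, (hit, false_alarm) in reported.items()
    }


if __name__ == "__main__":
    for (group, condition), score in summarize().items():
        print(f"{group:20s} {condition:10s} {score:.1%}")
```

Under that equal-split assumption, the untrained groups come out at roughly 51% (super recognizers) and 42% (typical recognizers), against 50% for pure guessing, and the trained groups at roughly 63.5% and 51%. These are back-of-the-envelope figures, not values reported in the study.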

Trained participants tended to take longer to scan the images than untrained participants: typical recognizers slowed by about 1.9 seconds and super recognizers by 1.2 seconds. Gray said this is a key message for anyone trying to determine whether a face they see is real or fake: Slow down and really inspect the features.

It should be noted, however, that the test was conducted immediately after the participants completed the training. It is therefore not known exactly how long the effect lasts.

“The training cannot be considered a sustainable and effective intervention, as it has not been retested,” Mike Ramon, a professor of applied data science and an expert in face processing at the Bern University of Applied Sciences in Switzerland, wrote in a review of the study conducted before its publication.

And because separate participants were used in the two experiments, we can’t be sure how much training improves an individual’s detection abilities, Ramon added. This would require testing the same group of people twice, before and after training.