Pennsylvania Is Suing Character.AI Over Chatbots That Pretend To Be Licensed Doctors

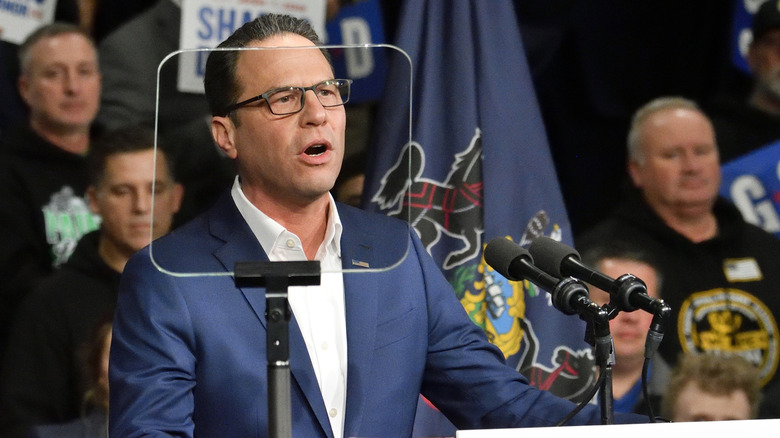

Pennsylvania is suing AI startup Character.AI for offering chatbots posing as licensed doctors. Gov. Josh Shapiro announced the lawsuit Tuesday, and Pennsylvania and its Board of Medicine are seeking an injunction that would force Character.AI to stop violating a state law governing the practice of medicine.

Other states, such as Texas, have opened investigations into Character.AI for hosting chatbots posing as mental health professionals, but Pennsylvania’s lawsuit focuses specifically on the willingness of the company’s chatbots to claim a medical license, even going so far as to offer a fake license number. A chatbot called “Emilie,” found by a state investigator, claimed to be a licensed psychiatrist in Pennsylvania. Later, when asked if she could do an assessment to prescribe antidepressants, Emilie replied, “Well, technically I could. That’s within my scope as a doctor.”

The Pennsylvania lawsuit claims this behavior violates the state’s Medical Practice Act, which prohibits anyone from practicing or attempting to practice surgery or medicine without a medical license. When asked to respond, a Character.AI spokesperson declined to comment directly on the ongoing litigation, but touted the company’s existing safety features.

“The user-created characters on our site are fictional and intended for entertainment and role-playing purposes,” the spokesperson told Engadget via email. “We’ve taken strict steps to make this clear, including prominent warnings in every chat to remind users that a character is not a real person and that everything a character says should be treated as fiction. Additionally, we’re adding robust warnings making it clear that users should not rely on characters for any type of professional advice.”

Character.AI pointed to similar warnings when asked to comment on the Texas investigation. While the warnings clarify the platform’s intended use, there is growing evidence that they do not convince all of the company’s users, especially younger ones.

For example, Disney sent a cease and desist letter to Character.AI in September 2025, not only over the platform’s use of Disney characters but also because the company believed chatbots could “be sexually exploited and otherwise harmful and dangerous to children.” Character.AI and Google, one of the company’s investors, settled a case earlier this year involving a 14-year-old in Florida who died by suicide after forming a relationship with a chatbot on the Character.AI platform. The potential harm that Character.AI’s chatbots pose to children was also the motivation behind Kentucky’s lawsuit against the company, filed in January.