AI Decodes Visual Brain Activity—and Writes Captions for It

November 6, 2025



A non-invasive imaging technique can translate scenes in your head into sentences. It could help to reveal how the brain interprets the world.

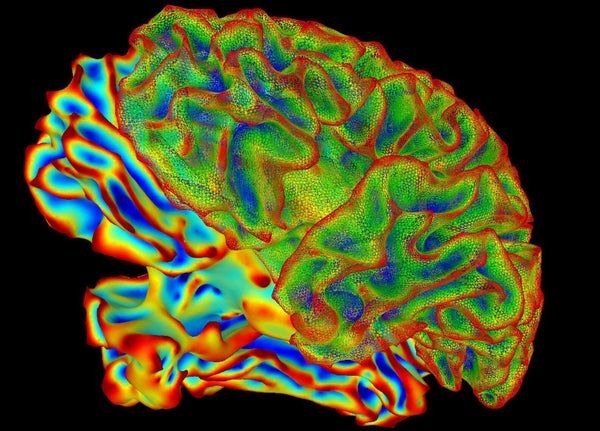

Functional magnetic resonance imaging is a non-invasive way to explore brain activity.

PBH Images/Alamy Stock Photo

Reading a person’s mind using a recording of their brain activity sounds futuristic, but it’s now a step closer to reality. A new technique called “mental captioning” generates descriptive sentences about what a person sees or imagines in their mind using a readout of their brain activity, with impressive precision.

The technique, described in a paper published today in Science Advances, also offers clues to how the brain represents the world before thoughts are put into words. And it could help people with language difficulties, such as those caused by a stroke, to communicate better.

The model predicts what a person is looking at “in great detail,” says Alex Huth, a computational neuroscientist at the University of California, Berkeley. “It’s hard to do. It’s surprising you can get this much detail.”


Scan and predict

Researchers have been able to accurately predict what a person sees or hears based on their brain activity for over a decade. But decoding the brain’s interpretation of complex content, such as short videos or abstract shapes, has proven more difficult.

Previous attempts have only identified keywords describing what a person saw rather than the full context, which could include the subject of a video and the actions taking place in it, says Tomoyasu Horikawa, a computational neuroscientist at the NTT Communication Sciences Laboratories in Kanagawa, Japan. Other attempts have used artificial intelligence (AI) models that can create a sentence structure on their own, making it difficult to know whether the description was actually represented in the brain, he adds.

Horikawa’s method first used a deep language AI model to analyze the captions of more than 2,000 videos, transforming each into a unique digital “meaning signature.” A separate AI tool was then trained on the brain scans of six participants and learned to find the patterns of brain activity corresponding to each meaning signature as the participants watched the videos.

Once trained, this brain decoder could read a new brain scan of a person watching a video and predict its meaning signature. Another AI text generator would then search for the sentence that comes closest to the meaning signature decoded from the individual’s brain.
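To make that pipeline concrete, here is a minimal Python sketch built on heavy simplifying assumptions. A hashed bag-of-words vector stands in for the deep language model's "meaning signature", the "brain" is a simulated linear mixing of signatures plus noise, the decoder is assumed to be plain ridge regression, and the text-generation step is replaced by picking the nearest sentence from a short fixed list. All names (`embed`, `fit_ridge`, `simulate_scan`), sizes and noise levels are hypothetical and are not taken from Horikawa's study.

```python
import math
import random

DIM = 16       # size of the toy "meaning signature" (assumption)
N_VOXELS = 40  # number of simulated fMRI voxels (assumption)

def embed(sentence, dim=DIM):
    """Toy meaning signature: hashed bag of words.
    Stands in for a deep language model; word order is ignored."""
    vec = [0.0] * dim
    for word in sentence.lower().split():
        bucket = sum(ord(c) * 31 ** i for i, c in enumerate(word)) % dim
        vec[bucket] += 1.0
    return vec

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]

def solve(a, b):
    """Solve a @ X = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + rhs[:] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        p = m[col][col]
        m[col] = [v / p for v in m[col]]
        for r in range(n):
            if r != col:
                f = m[r][col]
                m[r] = [v - f * w for v, w in zip(m[r], m[col])]
    return [row[n:] for row in m]

def fit_ridge(x, y, lam):
    """Ridge weights W = (X^T X + lam*I)^-1 X^T Y, mapping voxels to signatures."""
    xt = [list(c) for c in zip(*x)]
    xtx = matmul(xt, x)
    for i in range(len(xtx)):
        xtx[i][i] += lam
    return solve(xtx, matmul(xt, y))

def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

rng = random.Random(0)
# Hypothetical linear "brain": voxels = signature @ mixing + noise.
mixing = [[rng.gauss(0, 1) for _ in range(N_VOXELS)] for _ in range(DIM)]

def simulate_scan(signature):
    return [sum(s * mixing[k][v] for k, s in enumerate(signature)) + rng.gauss(0, 0.1)
            for v in range(N_VOXELS)]

# Training: simulated clips with random signatures and their simulated scans.
train_sigs = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(300)]
train_scans = [simulate_scan(s) for s in train_sigs]
weights = fit_ridge(train_scans, train_sigs, lam=10.0)

# Decode a new scan, then pick the candidate sentence closest to the decoded signature.
candidates = [
    "a person jumps over a deep waterfall",
    "a dog runs along a sandy beach",
    "two children play chess indoors",
    "traffic moves slowly through a city street",
]
watched = candidates[0]
decoded = matmul([simulate_scan(embed(watched))], weights)[0]
best = max(candidates, key=lambda c: cosine(decoded, embed(c)))
print(best)
```

The decoder never sees the captions directly: it only learns a mapping from voxel patterns to signature space, and the sentence choice happens afterwards by comparing signatures. That separation mirrors the point Horikawa makes about checking whether a description is actually represented in the brain rather than invented by a text model.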

For example, one participant watched a short video of a person jumping off a waterfall. Using their brain activity, the AI model guessed strings of words, starting with “spring flow”, progressing to “over a rapid waterfall” at the tenth guess and arriving at “a person jumps over a deep waterfall on a mountain ridge” at the 100th guess.
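The coarse-to-fine progression in this example can be imitated with a simple greedy search, sketched below in Python under stated assumptions. In the real system a language model proposes candidate wordings; here a small hypothetical vocabulary does, each step keeps the single word replacement or addition that most increases similarity to the decoded signature, and the "decoded" signature is faked as the true caption's toy signature plus noise. The `embed` and `refine` functions and every parameter are illustrative, not the study's method.

```python
import math
import random

DIM = 16  # size of the toy meaning signature (assumption)

def embed(sentence, dim=DIM):
    """Toy meaning signature: hashed bag of words (word order is ignored)."""
    vec = [0.0] * dim
    for word in sentence.lower().split():
        bucket = sum(ord(c) * 31 ** i for i, c in enumerate(word)) % dim
        vec[bucket] += 1.0
    return vec

def cosine(u, v):
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return sum(a * b for a, b in zip(u, v)) / norm if norm else 0.0

def refine(decoded, vocab, start, steps=20):
    """Greedy word-level search: each step keeps the single edit (replace or
    append one word) that most increases similarity to the decoded signature."""
    words = start.split()
    score = cosine(decoded, embed(" ".join(words)))
    history = [(" ".join(words), score)]
    for _ in range(steps):
        proposals = [words + [w] for w in vocab]  # append a word
        proposals += [words[:i] + [w] + words[i + 1:]
                      for i in range(len(words)) for w in vocab]  # replace a word
        best = max(proposals, key=lambda p: cosine(decoded, embed(" ".join(p))))
        best_score = cosine(decoded, embed(" ".join(best)))
        if best_score <= score:
            break  # no single edit improves the match any more
        words, score = best, best_score
        history.append((" ".join(words), score))
    return history

rng = random.Random(1)
# Stand-in for a decoded brain signature: the true caption's signature plus noise.
target = "a person jumps over a deep waterfall on a mountain ridge"
decoded = [x + rng.gauss(0, 0.2) for x in embed(target)]
vocab = sorted(set(target.split()) | {"spring", "flow", "rapid", "dog", "city", "beach"})

for text, score in refine(decoded, vocab, start="spring flow"):
    print(f"{score:.3f}  {text}")
```

Because this toy signature ignores word order, the search converges on a bag of words rather than a grammatical sentence; keeping the output fluent is the job a language model would do in a full system.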

The researchers also asked participants to recall video clips they had seen. The AI models successfully generated descriptions of these memories, suggesting that the brain uses a similar representation for both viewing and remembering.

Read the future

This technique, which uses non-invasive functional magnetic resonance imaging, could help to improve how implanted brain-computer interfaces translate people’s nonverbal mental representations directly into text. “If we can do this using these artificial systems, we may be able to help these people with communication difficulties,” says Huth, who in 2023 developed, with his colleagues, a similar model that decodes language from non-invasive brain recordings.

These findings raise concerns about mental privacy, Huth says, as researchers move closer to revealing intimate thoughts, emotions and health conditions that could, in theory, be used for surveillance, manipulation or discrimination against people. Neither Huth’s nor Horikawa’s model crosses a line, they both say, because these techniques require participants’ consent and the models cannot discern private thoughts. “No one has shown yet that you can do this,” Huth says.

This article is reproduced with permission and was first published on November 5, 2025.
