How to Find Relationships in Your Data

Collecting data is not enough. You can fill spreadsheets with data, but it’s useless if you can’t act on it. Regression is one of the most powerful statistical tools for finding relationships in data. Python makes the task easier and is much more flexible than a spreadsheet. Put down the pencil and paper and pick up Python instead. Here’s how to start.

Simple linear regression: finding trends

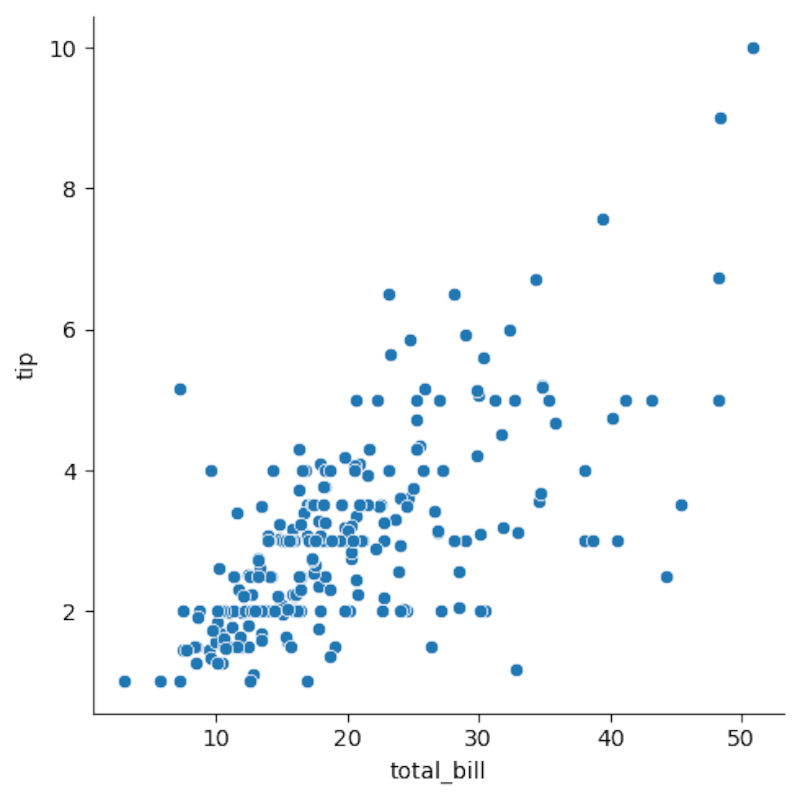

The simplest form of regression in Python is, well, simple linear regression. With simple linear regression, you try to see whether there is a relationship between two variables, the first known as the “independent variable” and the second as the “dependent variable”. The independent variable is generally plotted on the x-axis and the dependent variable on the y-axis. This creates the classic scatter plot of data points. The objective is to draw the line that best fits this scatter plot.
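
To make “the line that best fits” concrete, here is a minimal sketch of an ordinary least-squares fit written out by hand. The function name `fit_line` and the five data points are made up for illustration; the libraries used later in this article do the same job for you.

```python
# Ordinary least squares for one independent variable, written out by hand.
# The data below is made up purely for illustration.

def fit_line(xs, ys):
    """Return (slope, intercept) of the least-squares line through the points."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope = co-variation of x and y divided by the variation of x.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    # The least-squares line always passes through the point of means.
    intercept = mean_y - slope * mean_x
    return slope, intercept

xs = [1.0, 2.0, 3.0, 4.0, 5.0]   # independent variable (x-axis)
ys = [2.1, 3.9, 6.2, 7.8, 10.1]  # dependent variable (y-axis)

slope, intercept = fit_line(xs, ys)
print(f"y = {slope:.2f}x + {intercept:.2f}")
```

The slope says how much the dependent variable changes per unit of the independent variable, and the intercept is the predicted value when the independent variable is zero.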

We will start with the example of tipping data from New York restaurants. We want to see if there is a relationship between the total bill and the tip. This data set is included in the Seaborn statistical plotting package, my favorite data visualization tool. I’ve set up these tools in a Mamba environment for easy access.

First, we will import Seaborn, a statistical plotting library.

import seaborn as sns

Then we will load the data set:

tips = sns.load_dataset('tips')

If you use a Jupyter notebook, like the one I’ve linked to on my own GitHub page, be sure to include this line to display images in the notebook instead of an external window:

%matplotlib inline

We will now look at the scatter plot with the relplot function:

sns.relplot(x='total_bill',y='tip',data=tips)

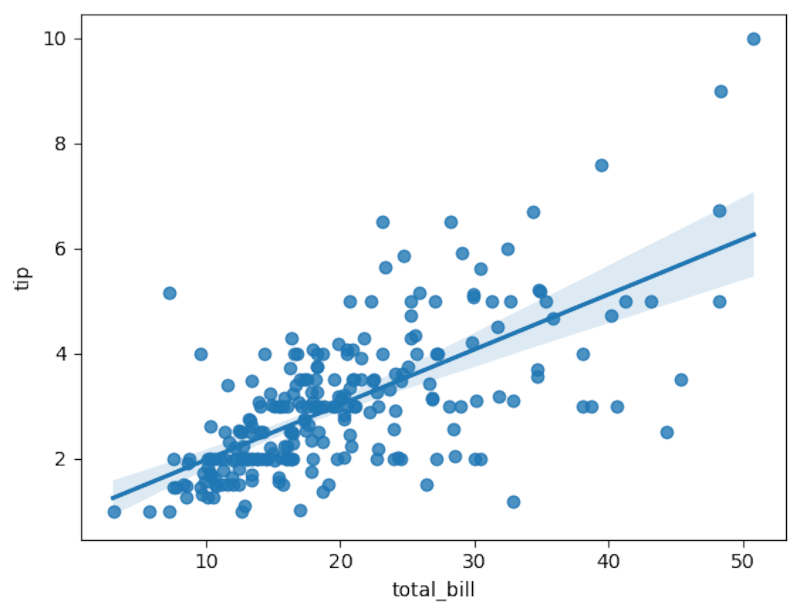

The scatter plot appears to be mostly linear. This means that there could be a positive linear relationship between the amount of the bill and the tip. We can draw the regression line with the regplot function:

sns.regplot(x='total_bill',y='tip',data=tips)

The line seems to fit quite well.

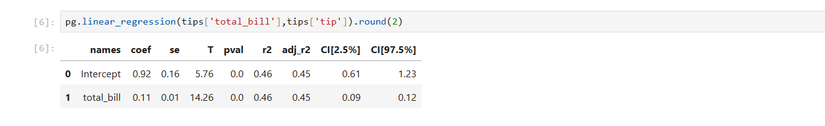

We can use another library, Pingouin, for a more formal analysis. Pingouin’s linear_regression function will calculate the coefficients of the regression equation for the line fitted to the data points and report how good the fit is.

import pingouin as pg

pg.linear_regression(tips['total_bill'],tips['tip']).round(2)

The rounding makes the results easier to read. The number to pay attention to in linear regression is the correlation coefficient. In the resulting table, it is listed as “r2” because it is the square of the correlation coefficient. It is 0.46, which indicates a reasonably good fit. Taking the square root reveals a correlation of around 0.68, which is fairly close to 1, indicating a positive linear relationship more formally than the plot that we saw earlier.
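
As a rough sketch of how these two numbers relate, the snippet below computes the Pearson correlation coefficient by hand on made-up data and squares it. For a regression with a single independent variable, that square is the r² value. The helper name `pearson_r` and the sample values are illustrative assumptions, not part of Pingouin.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Made-up bills and tips, for illustration only.
xs = [10.0, 20.0, 30.0, 40.0, 50.0]
ys = [2.0, 2.5, 5.0, 4.5, 7.0]

r = pearson_r(xs, ys)
r2 = r ** 2
print(f"r = {r:.2f}, r2 = {r2:.2f}")

# Going the other way, from the r2 of 0.46 reported for the tips data:
r_tips = math.sqrt(0.46)
print(f"sqrt(0.46) = {r_tips:.2f}")
```

Note that the square root only recovers the magnitude of the correlation; the sign comes from the slope of the line, which is positive here.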

We can also build a model from the values in the table. You may remember the equation of a line: y = mx + b. Here y is the dependent variable and m is the coefficient of x, the total bill, which is 0.11. This determines how steep the line is. The b is the y-intercept, or 0.92.

The resulting equation based on this model is

tip = 0.11(total bill) + 0.92

Regression equations are usually written the other way around, with the intercept first, so it would be:

tip = 0.92 + 0.11(total_bill)

We can write a short Python function that predicts the tip from the amount of the bill.

def tip(total_bill):
    return 0.92 + 0.11 * total_bill

The second line must be indented, so make sure you indent it on your machine. Remember that Python indents are conventionally four spaces.

Let’s predict the tip on a restaurant bill of $100:

tip(100)

The predicted tip is around $12.
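
As a small sketch, the same model can be applied to several bill amounts at once. The function is named `predict_tip` here (a hypothetical name) so that it does not clash with the “tip” column, and the coefficients are the rounded values from the table above.

```python
def predict_tip(total_bill):
    """Predicted tip from the fitted line: intercept 0.92, slope 0.11."""
    return 0.92 + 0.11 * total_bill

# Print the predicted tip for a handful of bill amounts.
for bill in (10, 25, 50, 100):
    print(f"${bill:>3} bill -> predicted tip ${predict_tip(bill):.2f}")
```

Keep in mind that a fitted line is only trustworthy across the range of bills it was fitted on. If the data set contains few or no bills near $100, that prediction is an extrapolation.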

Multiple linear regression: taking regression into the third dimension, and beyond

Linear regression can be extended to more than two variables by looking at more than one independent variable. Instead of fitting a line to data points on a plane, you fit a plane to a three-dimensional scatter plot. Unfortunately, this is harder to visualize than a 2D regression. I have used multiple regression to build a model of laptop prices based on their specifications.

We will use the tips data set again. This time, we will also examine the size of the party with the “size” column. It’s easy to do in Pingouin.

pg.linear_regression(tips[['total_bill','size']],tips['tip']).round(2)

Note the double brackets on the first argument, which select both the total bill and the party size. Note that the r² is nearly identical. This means that the fit is still good and that the total bill and the size of the party together are good predictors of the tip.

We can rewrite our previous model to take the party size into account, using the coefficient for size from the table:

def tip(total_bill,size):
    return 0.67 + 0.09 * total_bill + 0.19 * size
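
To show what fitting a plane involves, here is a sketch that uses NumPy’s least-squares solver instead of Pingouin. The data is synthetic: it is generated from the rounded coefficients above plus random noise, so it is an assumption for illustration and not the real tips data. A correct fit should recover coefficients close to the ones used to generate it.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the sketch is repeatable
n = 200

# Synthetic independent variables (NOT the real tips data).
total_bill = rng.uniform(5, 50, n)
size = rng.integers(1, 7, n).astype(float)

# Synthetic dependent variable built from known coefficients plus noise.
tip = 0.67 + 0.09 * total_bill + 0.19 * size + rng.normal(0, 0.1, n)

# Design matrix: a column of ones for the intercept, then one column
# per independent variable.
X = np.column_stack([np.ones(n), total_bill, size])

# Solve for the coefficients that minimize the squared error.
coef, *_ = np.linalg.lstsq(X, tip, rcond=None)
intercept, b_bill, b_size = coef
print(f"tip = {intercept:.2f} + {b_bill:.2f}*total_bill + {b_size:.2f}*size")
```

Each extra independent variable simply adds one more column to the design matrix, which is why the approach extends beyond three dimensions even though the plot does not.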

Nonlinear regression: fitting curves

Not only can you fit a straight line, but you can also fit nonlinear curves. I will demonstrate this by using NumPy to generate some data points that represent a quadratic curve.

First of all, I will generate a large array of data points in NumPy for the x-axis:

import numpy as np

x = np.linspace(-100,100,1000)

Now I’m going to create a quadratic function for the y-axis:

y = 4*x**2 + 2*x + 3

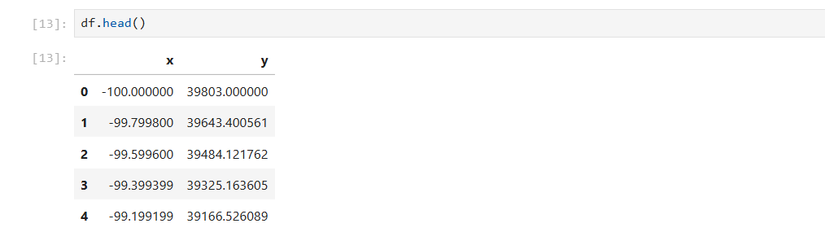

To run the regression, I will create a pandas DataFrame, a data structure similar to a table in a relational database, for the x and y axes. It will have columns for the x and y values, named “x” and “y”. We pass pandas a dictionary describing the DataFrame that we want to create. We will call the DataFrame “df”.

import pandas as pd

df = pd.DataFrame({'x':x,'y':y})

We can examine our dataframe with the head method:

df.head()

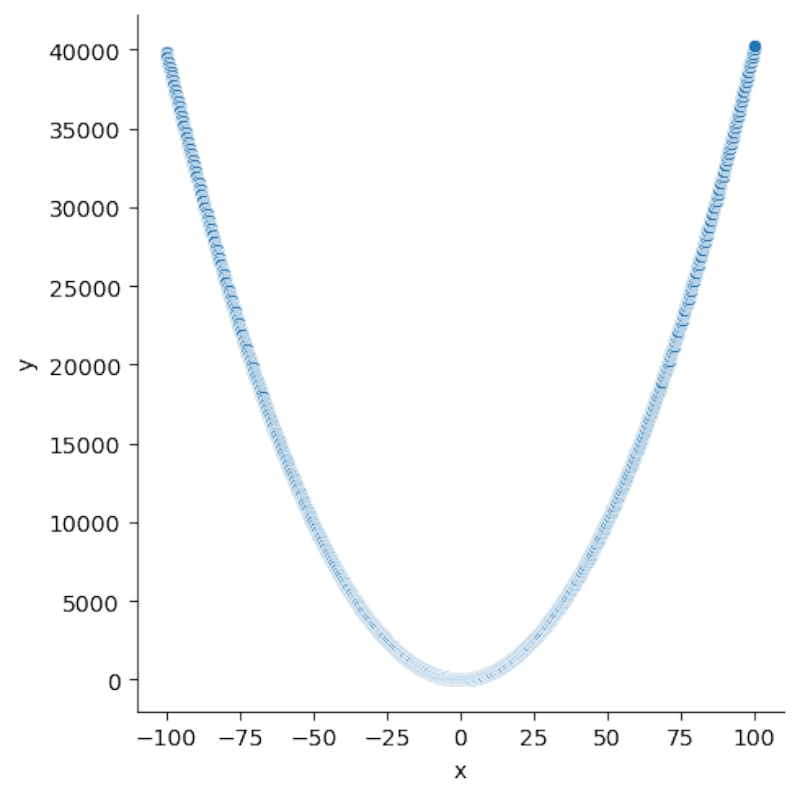

We can create a scatter plot with Seaborn as we did earlier with the linear data:

sns.relplot(x='x',y='y',data=df)

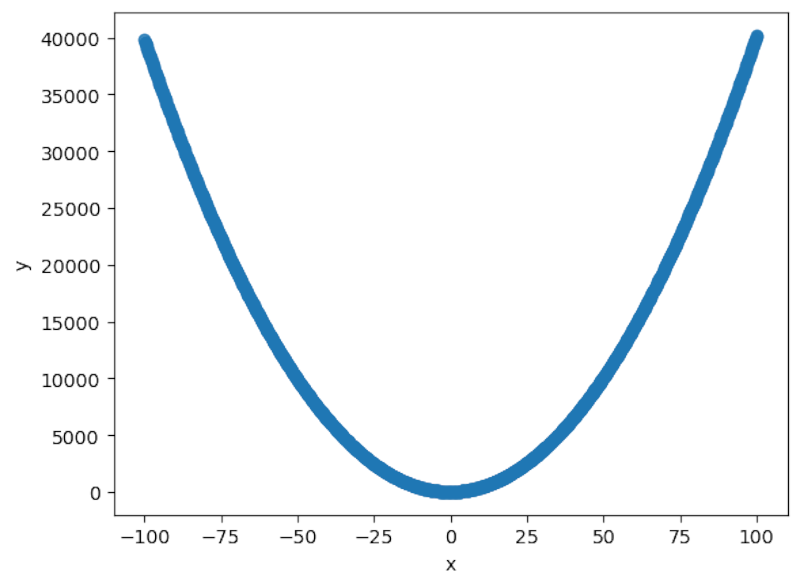

It resembles the classic parabolic plot that you may remember from math class, drawn on your graphing calculator (which Python can replace). Let’s see if we can fit a parabola to it. Seaborn’s regplot function has the order option, which specifies the degree of the polynomial to fit. Since we are trying to fit a quadratic curve, we will set order to 2:

sns.regplot(x='x',y='y',order=2,data=df)

It does seem to fit a classic quadratic parabola.

To obtain the formal regression, we can use a nonlinear regression technique with Pingouin. We will simply add another column to our DataFrame holding the squares of the x values:

df['x2'] = df['x']**2

We can then use a linear regression to fit the quadratic curve:

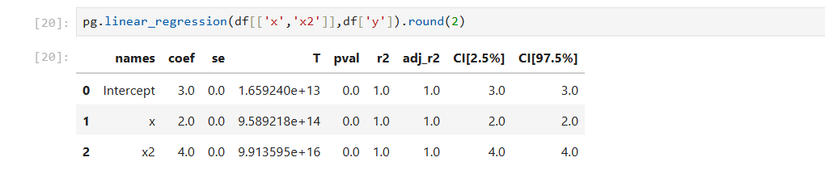

pg.linear_regression(df[['x','x2']],df['y']).round(2)

Because this was an artificial data set, the r² is 1, indicating a perfect fit, which you would probably not see on real data.
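
The trick of adding a squared column can be sketched without Pingouin as well. The snippet below is an illustrative cross-check using NumPy’s least-squares solver on the same artificial data: it treats x and x² as two independent variables in an ordinary linear regression and should recover the constants used to generate y.

```python
import numpy as np

# Same artificial data as above.
x = np.linspace(-100, 100, 1000)
y = 4 * x**2 + 2 * x + 3

# Columns: ones for the intercept, x, and x squared.
X = np.column_stack([np.ones_like(x), x, x**2])

# The model is still linear in its coefficients, so ordinary
# least squares applies even though the curve is not a line.
coef, *_ = np.linalg.lstsq(X, y, rcond=None)
intercept, b_x, b_x2 = coef
print(np.round(coef, 2))  # intercept, coefficient of x, coefficient of x**2
```

This is why the technique still counts as linear regression: the curve is nonlinear in x, but the equation is a plain weighted sum of the columns.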

We can also build a predictive model using a function:

def quad(x):
    return 3 + 2*x + 4*x**2

You can also extend this method to polynomials with degrees greater than 2.
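
As a sketch of a higher-degree fit, NumPy’s polyfit function takes the degree directly, so no extra columns are needed. The cubic below is made up for illustration. Note that polyfit returns the coefficients from the highest power down, the reverse of the intercept-first order used in the models above.

```python
import numpy as np

# Made-up cubic data, for illustration only.
x = np.linspace(-10, 10, 200)
y = x**3 - 4 * x**2 + 2 * x + 3

# Fit a degree-3 polynomial; coefficients come back highest power first.
coeffs = np.polyfit(x, y, deg=3)

# poly1d wraps the coefficients in a callable predictive model.
model = np.poly1d(coeffs)

print(np.round(coeffs, 2))
print(round(float(model(2.0)), 2))
```

Higher degrees will always hug the data more closely, so on real, noisy data it is worth checking that the extra terms describe a genuine pattern rather than noise.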

Logistic regression: fitting binary categories

If you want to find a relationship involving a binary category, such as a risk factor like whether a person smokes or not, you can use logistic regression.

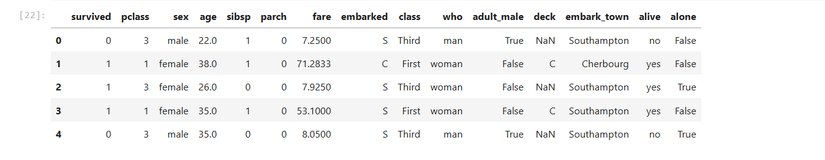

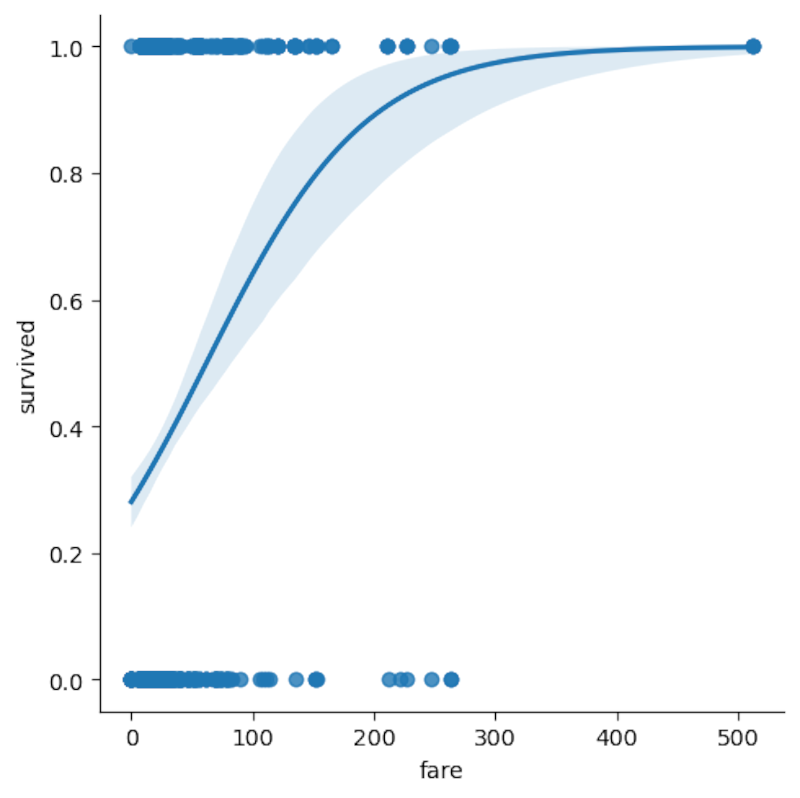

The easiest way to visualize this is again to use the Seaborn library. We will load the data set of passengers on the Titanic. We want to see if the price of the ticket was a predictor of who would or would not survive the ill-fated voyage.

titanic = sns.load_dataset('titanic')

We can examine the data as we did with the quadratic dataframe:

titanic.head()

We will use the lmplot function because it can fit a logistic curve:

sns.lmplot(x='fare',y='survived',logistic=True,data=titanic)

We see the logistic curve fitted over the passengers, who are separated by whether they survived or not. The “survived” column is already coded as 0 for “did not survive” and 1 for “survived”.
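
The S-shaped curve in such a plot comes from the logistic function, which squeezes any number into the range between 0 and 1 so that it can be read as a probability. The sketch below shows the idea. The intercept and slope are hypothetical values chosen for illustration; they are not the coefficients fitted to the Titanic data.

```python
import math

def logistic(z):
    """Map any real number to a value strictly between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical coefficients, for illustration only.
# These are NOT the values fitted to the Titanic data.
INTERCEPT = -1.0
SLOPE = 0.02

def survival_probability(fare):
    """Estimated probability of the outcome coded as 1 for a given fare."""
    return logistic(INTERCEPT + SLOPE * fare)

for fare in (10, 50, 100, 250):
    print(f"fare {fare:>3}: probability {survival_probability(fare):.2f}")
```

A logistic regression fits the intercept and slope inside the function, which is why its output is a smooth probability curve even though the observed outcomes are only 0 or 1.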

We can use Pingouin to formally determine whether the ticket price was a predictor of survival on the Titanic. Among its many statistical tests, Pingouin offers logistic regression:

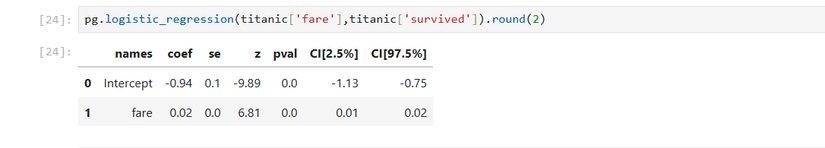

pg.logistic_regression(titanic['fare'],titanic['survived']).round(2)

The number to pay attention to in a statistical test is the p-value, listed in the table as “pval”. It is 0.0 after rounding, which indicates that the price of the ticket was a statistically significant predictor of surviving the Titanic’s voyage.

These operations show why Python is a language of choice for data analysis. Operations that could take days to do by hand, or that were even beyond what statisticians were willing to attempt, take only a few seconds in Python. These regression techniques will help you explore your data to find relationships and make predictions.