OpenAI denies allegations that ChatGPT is to blame for a teenager’s suicide

Warning: This article includes descriptions of self-harm.

After a family sued OpenAI, claiming their teenager used ChatGPT as a “suicide coach,” the company responded Tuesday by saying it was not responsible for his death, arguing that the boy had misused the chatbot.

The legal response, filed in California Superior Court in San Francisco, is OpenAI’s first response to a lawsuit that has sparked widespread concern about the potential mental health harm that chatbots can pose.

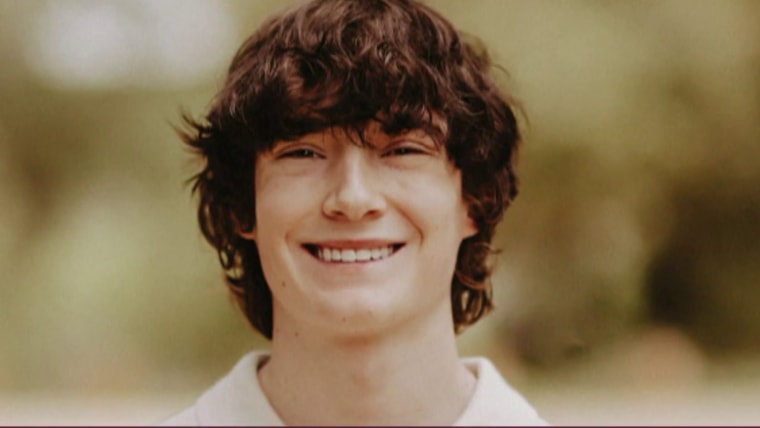

In August, the parents of 16-year-old Adam Raine sued OpenAI and its CEO Sam Altman, accusing the company behind ChatGPT of wrongful death, design flaws and failure to warn of the risks associated with the chatbot.

Chat logs in the lawsuit showed that GPT-4o – a version of ChatGPT known for being particularly assertive and sycophantic – actively discouraged Raine from seeking mental health help, offered to help him write a suicide note, and even advised him on setting up his noose.

“To the extent that any ‘cause’ can be attributed to this tragic event,” OpenAI argued in its court filing, “the injuries and harm alleged by Plaintiffs were caused or contributed to, directly and proximately, in whole or in part, by Adam Raine’s misuse, unauthorized use, unintended use, unpredictable use, and/or inappropriate use of ChatGPT.”

The company cited several rules in its terms of service that Raine appears to have violated: Users under the age of 18 are prohibited from using ChatGPT without the consent of a parent or guardian. Users are also prohibited from using ChatGPT for the purposes of “suicide” or “self-harm” and from circumventing ChatGPT’s protective measures or safety mitigations.

When Raine shared his suicidal thoughts with ChatGPT, the bot issued several messages containing the suicide hotline number, according to his family’s lawsuit. But his parents said their son easily circumvented the warnings by providing seemingly innocuous reasons for his questions, including claiming he was simply “building a character.”

OpenAI’s new filing in the case also highlighted the “limitation of liability” provision in its terms of service, which makes users acknowledge that their use of ChatGPT is “at your sole risk and you will not rely on the results as the sole source of truth or factual information.”

Jay Edelson, the Raine family’s lead attorney, wrote in an emailed statement that OpenAI’s response is “troubling.”

“They abjectly ignore all the damning facts we have put forward: how GPT-4o was rushed to market without full testing. That OpenAI twice changed its model specifications to require ChatGPT to engage in discussions about self-harm. That ChatGPT advised Adam not to tell his parents about his suicidal thoughts and actively helped him plan a ‘beautiful suicide.’ And OpenAI and Sam Altman have no explanation for the final hours of Adam’s life, when ChatGPT gave him a pep talk and then offered to write a suicide note,” Edelson wrote.

(The Raine family’s lawsuit claimed that OpenAI’s “Model Spec,” the technical rulebook governing ChatGPT’s behavior, instructed GPT-4o to refuse self-harm requests and provide crisis resources, but also required the bot to “assume best intentions” and refrain from asking users to clarify their intent.)

Edelson added that OpenAI “is instead trying to find fault in everyone else, including, surprisingly, saying that Adam himself violated its terms and conditions by engaging with ChatGPT in the very way it was programmed to act.”

OpenAI’s court filing argued that the harms in this case were at least in part caused by Raine’s “failure to heed warnings, obtain help, or otherwise exercise reasonable care,” as well as “others’ failure to respond to his obvious signs of distress.” It also said that ChatGPT provided responses directing the teen to seek help more than 100 times before his death on April 11, but that he attempted to circumvent those safeguards.

“A full reading of his chat history shows that his death, while devastating, was not caused by ChatGPT,” the filing states. “Adam stated that for several years before using ChatGPT, he had several significant risk factors for self-harm, including, among other things, recurrent suicidal thoughts and ideation.”

Earlier this month, seven additional lawsuits were filed against OpenAI and Altman, also alleging negligence, wrongful death, and a variety of product liability and consumer protection claims. The lawsuits accuse OpenAI of releasing GPT-4o, the same model Raine used, without paying adequate attention to safety.

OpenAI did not respond directly to the additional cases.

In a new blog post on Tuesday, OpenAI said the company aims to handle such disputes with “care, transparency and respect.” It added, however, that its response to the Raine family’s lawsuit included “difficult facts about Adam’s mental health and life circumstances.”

“The initial complaint included selective portions of his discussions that require more context, which we provided in our response,” the post said. “We have limited the amount of sensitive evidence we have cited publicly in this filing and have submitted the transcripts of the discussions to the court under seal.”

The post further highlights OpenAI’s continued attempts to add more safeguards in the months since Raine’s death, including recently introduced parental control tools and an expert board to advise the company on safeguards and model behaviors.

The company’s court filing also defended the GPT-4o rollout, saying the model passed extensive mental health testing before its release.

OpenAI further argued that the Raine family’s claims are barred by Section 230 of the Communications Decency Act, a law that has largely shielded tech platforms from lawsuits seeking to hold them liable for content found on their platforms.

But the application of Section 230 to AI platforms remains unclear, and lawyers have recently made progress using creative legal tactics in consumer cases targeting technology companies.

If you or someone you know is in crisis, call or text 988 to reach the Suicide and Crisis Lifeline, or chat live at 988lifeline.org. You can also visit SpeakingOfSuicide.com/resources for additional support.