These brain implants speak your mind — even when you don’t want to : Shots

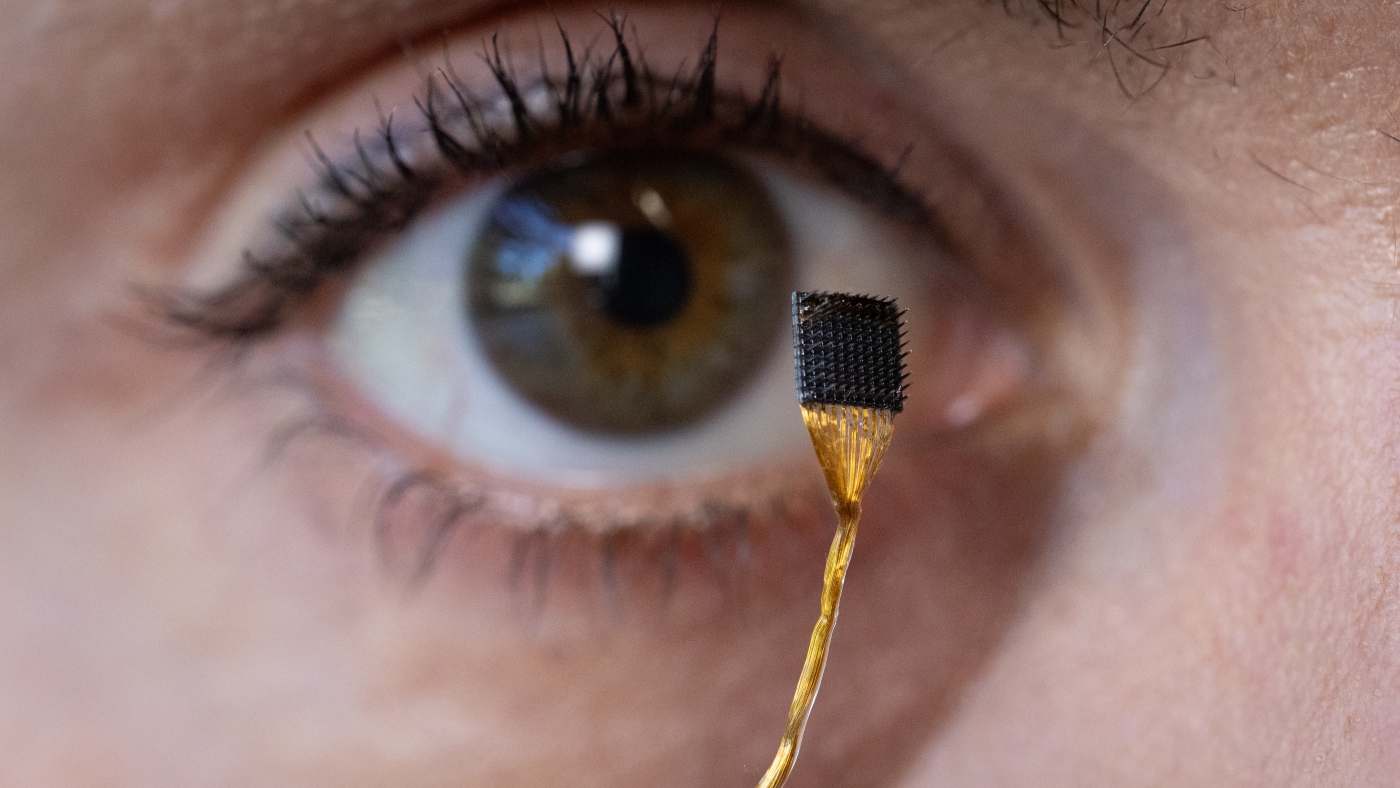

Postdoctoral researcher Erin Kunz holds an array of microelectrodes that can be placed on the surface of the brain as part of a brain-computer interface.

Jim Gensheimer


Surgically implanted devices that allow paralyzed people to speak can also eavesdrop on their inner monologue.

That's the conclusion of a study of brain-computer interfaces (BCIs) published in the journal Cell.

The finding could lead to BCIs that allow paralyzed users to produce synthesized speech more quickly and with less effort.

But the idea that the new technology can decode a person's inner voice is "unsettling," says Nita Farahany, a professor of law and philosophy at Duke University and author of the book The Battle for Your Brain.

"The more we push this research forward, the more transparent our brains become," Farahany says, adding that measures to protect people's privacy are lagging behind the technology that decodes signals in the brain.

From brain signal to speech

BCIs are able to decode speech using tiny arrays of electrodes that monitor activity in the brain's motor cortex, which controls the muscles involved in speaking. Until now, these devices have relied on signals produced when a paralyzed person is actively trying to speak a word or sentence.

"We record the signals as they're attempting to speak and translate these neural signals into the words that they're trying to say," says Erin Kunz, a postdoctoral researcher at Stanford University's Neural Prosthetics Translational Laboratory.

Relying on signals produced when a paralyzed person attempts speech makes it easy for that person to mentally zip their lips and avoid saying too much. But it also means they must make a concerted effort to convey a word or sentence, which can be tiring and time-consuming.

So Kunz and a team of scientists set out to find a better way, by studying the brain signals of four people who were already using BCIs to communicate.

The team wanted to know whether they could decode brain signals that are far more subtle than those produced by attempted speech. They wanted to decode imagined speech.

With attempted speech, a paralyzed person does their best to physically produce understandable spoken words, even though they no longer can. With imagined, or inner, speech, the individual merely thinks about a word or sentence, perhaps by imagining what it would sound like.

The team found that imagined speech produces signals in the motor cortex that are similar to, but fainter than, those of attempted speech. And with help from artificial intelligence, they were able to translate those fainter signals into words.

"We were able to achieve 74% accuracy decoding sentences from a 125,000-word vocabulary," Kunz says.

Decoding a person's inner speech made communication faster and easier for the participants. But Kunz says the success raised an uncomfortable question: "If inner speech is similar enough to attempted speech, could it unintentionally leak out when someone is using a BCI?"

Their research suggested it could, under certain circumstances, such as when a person was silently recalling a sequence of directions.

Password protection?

So the team tried two strategies to protect the privacy of BCI users.

First, they programmed the device to ignore inner speech signals. That worked, but it sacrificed the speed and ease that came with decoding inner speech.

So, Kunz says, the team borrowed an approach used by virtual assistants like Alexa and Siri, which wake up only when they hear a specific phrase.

"We picked Chitty Chitty Bang Bang, because it doesn't occur too frequently in conversations and it's highly identifiable," Kunz says.

This allowed participants to control when their inner speech could be decoded.
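The article does not say how the device implements this gate internally. As a rough illustration only, and assuming the decoder emits a stream of text phrases, the wake-phrase logic might look like the Python sketch below. The function name `gate_inner_speech` and the exact-match rule are hypothetical; only the phrase itself comes from the study as described here.

```python
from typing import Iterable, List

# Unlock phrase reported in the study; the matching rule below is a hypothetical simplification.
UNLOCK_PHRASE = "chitty chitty bang bang"


def gate_inner_speech(decoded_phrases: Iterable[str],
                      unlock_phrase: str = UNLOCK_PHRASE) -> List[str]:
    """Hypothetical gate: return only phrases decoded after the unlock phrase.

    Everything before the unlock phrase is discarded, so inner speech the
    user never chose to share is not output.
    """
    unlocked = False
    output: List[str] = []
    for phrase in decoded_phrases:
        if not unlocked:
            # Compare case-insensitively, ignoring surrounding whitespace.
            if phrase.strip().lower() == unlock_phrase:
                unlocked = True  # the unlock phrase itself is not passed through
            continue
        output.append(phrase)
    return output


print(gate_inner_speech([
    "turn left at the bakery",   # silently recalled directions: dropped
    "Chitty Chitty Bang Bang",   # user unlocks the decoder
    "I would like some water",   # shared
]))
```

In this sketch, the recalled directions are discarded and only the sentence after the unlock phrase is printed. Whether the real system also re-locks after a pause or on a second phrase is not described in the article.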

But the safeguards tested in the study "assume that we can control our thinking in ways that may not actually match how our minds work," Farahany says.

For example, Farahany says, study participants couldn't prevent the BCI from decoding numbers they were thinking of, even when they didn't intend to share them.

This suggests that "the boundary between public and private thought may be blurrier than we assume," Farahany says.

Privacy concerns are less of an issue with surgically implanted BCIs, which are well understood by their users and will be regulated by the Food and Drug Administration when they reach the market. But that kind of education and regulation may not extend to upcoming consumer BCIs, which will probably be worn as caps and used for activities like playing video games.

Early consumer devices won't be sensitive enough to detect words the way implanted devices can, Farahany says. But the new study suggests that capability could be added someday.

If so, Farahany says, companies like Apple, Amazon, Google and Meta could find out what is going on in a consumer's mind, even if that person doesn't intend to share the information.

"We have to recognize that this new era of brain transparency really is an entirely new frontier for us," Farahany says.

But it's encouraging, she says, that scientists are already thinking about ways to help people keep their private thoughts private.