Why NVMe drives won’t speed up your Plex NAS

Many NAS builders, especially those running traditional mechanical hard drives, add an “SSD cache” to their units in an attempt to improve performance.

But is it really worth it?

What is an SSD cache?

Hard drives offer massive storage capacity at relatively low cost, but they are physically limited by spinning platters and moving read/write heads. This mechanical nature makes them inherently slow at locating and retrieving small pieces of data scattered across the disk. That is a problem in PCs, and it is just as much of a problem in a NAS. RAID helps, but an array of hard drives is still no match for an SSD.

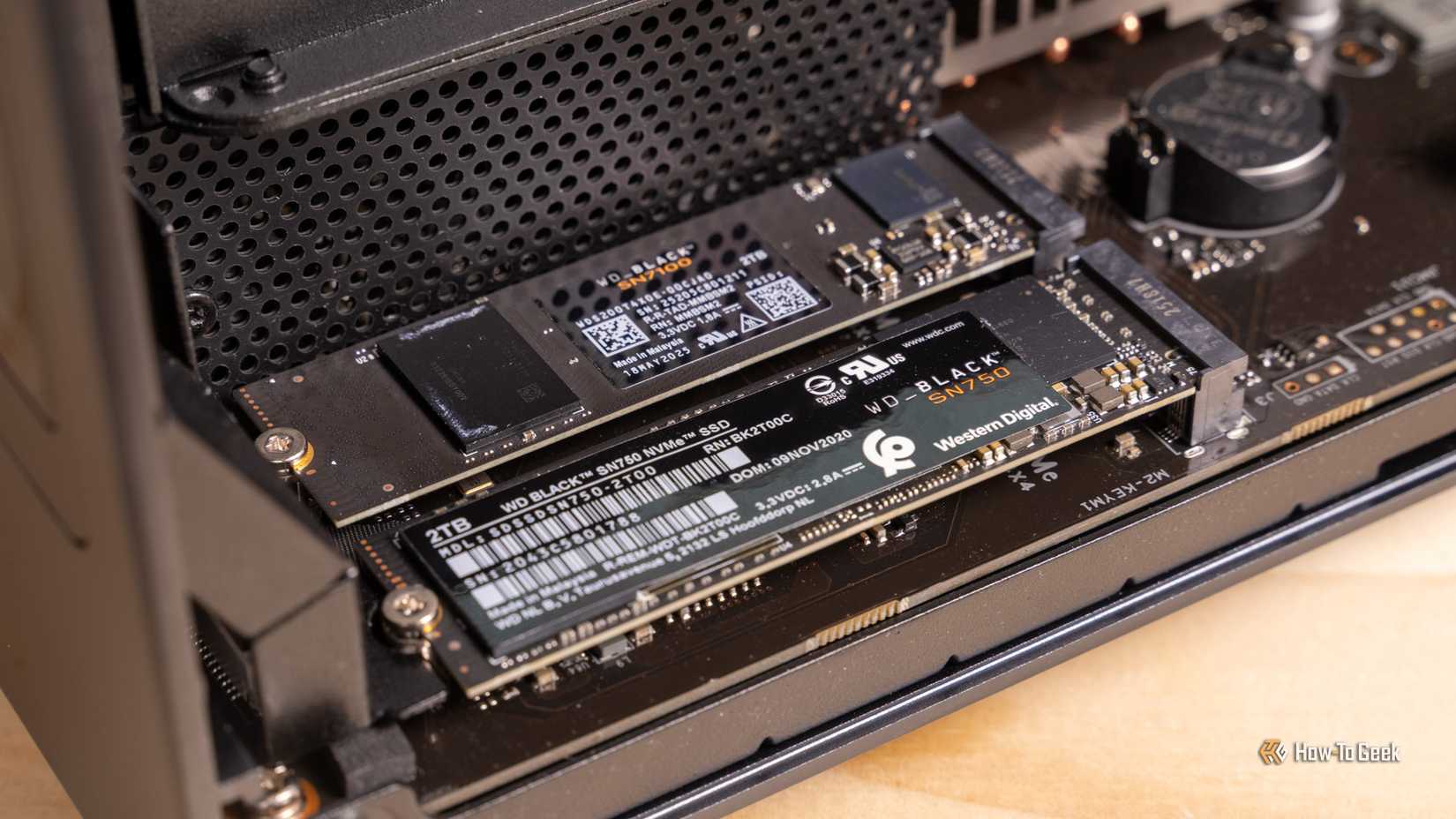

Enter the SSD cache. A solid state drive uses flash memory, which has no moving parts and can access data almost instantly. When you install an SSD cache in a NAS, you are essentially creating a high-speed buffer between these slower mechanical drives and your network. The caching system uses an algorithm to identify “hot” data: the files and applications you access most frequently. Instead of forcing the mechanical arms of your hard drives to retrieve this data every time you ask, the NAS stores a copy of it on the fast SSD. When you request this file again, the NAS serves it directly from flash memory, bypassing the mechanical bottleneck entirely.
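To make the idea of “hot” data concrete, here is a minimal sketch of a read cache. It assumes a least-recently-used (LRU) eviction policy, which is only a stand-in: real NAS operating systems use their own algorithms, and the `ReadCache` class, the `disks` dictionary and the block names are all hypothetical.

```python
from collections import OrderedDict


class ReadCache:
    """Toy read-only cache with least-recently-used (LRU) eviction."""

    def __init__(self, capacity, backing_store):
        self.capacity = capacity            # max number of blocks held on the "SSD"
        self.backing_store = backing_store  # dict standing in for the hard drives
        self.blocks = OrderedDict()         # cached copies, least recently used first
        self.hits = 0
        self.misses = 0

    def read(self, key):
        if key in self.blocks:
            # Cache hit: serve from flash and mark the block as recently used.
            self.hits += 1
            self.blocks.move_to_end(key)
            return self.blocks[key]
        # Cache miss: fetch from the slow disks and keep a copy on the SSD.
        self.misses += 1
        data = self.backing_store[key]
        self.blocks[key] = data
        if len(self.blocks) > self.capacity:
            # Evict the least recently used block to make room.
            self.blocks.popitem(last=False)
        return data


disks = {f"block{i}": f"data{i}" for i in range(10)}
cache = ReadCache(capacity=2, backing_store=disks)
for key in ["block1", "block2", "block1", "block3", "block1"]:
    cache.read(key)
print(cache.hits, cache.misses)
```

In this toy run, `block1` is requested repeatedly and stays in the cache, while `block2` is pushed out once the cache fills up. Data that is read once and never again gains nothing from the cache.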

Additionally, caches can operate in two main modes: read-only and read-write. A read-only cache simply holds copies of frequently accessed data for faster retrieval. A read-write cache goes one step further by acting as a temporary landing zone for incoming data. When you save a file to the NAS, it is immediately written to the super-fast SSD, allowing your computer to complete the transfer quickly. The NAS then silently offloads this data from the SSD to the permanent mechanical hard drives in the background.
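The read-write mode can be sketched in the same spirit. This is an illustration under simplifying assumptions, not how any particular NAS implements it: the `WriteBackCache` class is hypothetical, a dictionary stands in for the hard drives, and the background offload is reduced to an explicit `flush()` call.

```python
class WriteBackCache:
    """Toy read-write cache: writes land on the SSD first, then flush to disk."""

    def __init__(self, backing_store):
        self.backing_store = backing_store  # dict standing in for the hard drives
        self.dirty = {}                     # blocks on the SSD, not yet on disk

    def write(self, key, data):
        # The client's write completes as soon as the SSD holds the data.
        self.dirty[key] = data

    def read(self, key):
        # The newest copy may exist only on the SSD, so check there first.
        if key in self.dirty:
            return self.dirty[key]
        return self.backing_store[key]

    def flush(self):
        # Background step: copy pending blocks to the hard drives.
        flushed = len(self.dirty)
        self.backing_store.update(self.dirty)
        self.dirty.clear()
        return flushed


hdd = {}
wb = WriteBackCache(hdd)
wb.write("report.docx", b"v1")
on_disk_before_flush = "report.docx" in hdd  # still only on the SSD
flushed = wb.flush()
on_disk_after_flush = "report.docx" in hdd   # now on the hard drives
print(on_disk_before_flush, flushed, on_disk_after_flush)
```

Note that between `write()` and `flush()` the only copy of the file lives on the SSD, which is the trade-off a read-write cache makes in exchange for faster saves.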

Does this actually improve performance?

The short answer is yes, but the long answer depends heavily on the specific type of data traffic your NAS handles on a daily basis. To understand performance gains, we actually need to differentiate between sequential operations and random operations.

Sequential data involves large, continuous blocks of information, such as high-definition movies, large audio files, or entire system backup images. Mechanical hard drives, especially when grouped together in a RAID configuration, are surprisingly efficient at handling sequential data. In many cases, a standard RAID array can easily saturate a typical one-gigabit or even two-and-a-half-gigabit network connection without breaking a sweat. If you’re adding an SSD cache just to move large video files, you probably won’t see any improvement because the bottleneck is your network link, not the hard drives.
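A quick back-of-the-envelope calculation shows why. The sketch below treats a transfer as limited by whichever is slower, the storage or the network link. The two storage figures are assumed round numbers for illustration, not measurements, and the link ceiling ignores protocol overhead.

```python
def link_limit_mb_s(gigabits_per_second):
    """Theoretical ceiling of a network link in MB/s (1 byte = 8 bits)."""
    return gigabits_per_second * 1000 / 8


def effective_throughput(storage_mb_s, link_gbit_s):
    """A transfer can go no faster than the slower of storage and network."""
    return min(storage_mb_s, link_limit_mb_s(link_gbit_s))


# Assumed figures for illustration, not measurements:
HDD_ARRAY_MB_S = 400   # hypothetical sequential rate of a small RAID array
SSD_CACHE_MB_S = 2000  # hypothetical sequential rate of an NVMe cache

for link in (1, 2.5, 10):
    hdd = effective_throughput(HDD_ARRAY_MB_S, link)
    ssd = effective_throughput(SSD_CACHE_MB_S, link)
    print(f"{link} Gbit/s link: HDD array {hdd} MB/s, SSD cache {ssd} MB/s")
```

With these assumed numbers, the hard drive array and the SSD cache deliver exactly the same speed on the one-gigabit and two-and-a-half-gigabit links (125 MB/s and 312.5 MB/s respectively). The faster storage only pulls ahead once the link itself is upgraded.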

However, performance takes a dramatic leap when dealing with random I/O operations. Random data involves thousands of small, scattered files read and written simultaneously. This happens when you host virtual machines, run active databases, host a busy website, or manage large directories of tiny text files or code repositories.

Mechanical drives choke during random operations because the read/write head must physically move to a new position on the platter for almost every request, and each of those seeks takes several milliseconds. The result is high latency and slow response times. An SSD has no moving parts, so its access latency is only a small fraction of a millisecond. An SSD cache can handle tens of thousands of random I/O operations per second, compared with a few hundred at best for a mechanical drive.
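The gap can be estimated with simple arithmetic. The sketch below uses a common rule of thumb: each random request costs one average seek plus, on average, half a platter rotation. The 8.5 ms seek time is an assumed ballpark for a 7200 RPM desktop drive, not a figure from any specific datasheet, and the model ignores command queueing and the drive's own cache.

```python
def hdd_random_iops(rpm, avg_seek_ms):
    """Rough random IOPS of one hard drive: one seek plus half a rotation per request."""
    rotation_ms = 60_000 / rpm           # time for one full revolution
    avg_rotational_ms = rotation_ms / 2  # on average the sector is half a turn away
    return 1000 / (avg_seek_ms + avg_rotational_ms)


iops = hdd_random_iops(rpm=7200, avg_seek_ms=8.5)
print(f"Estimated random IOPS per drive: {iops:.0f}")
```

Under these assumptions a single drive lands just under 80 random operations per second, so it takes several drives working together to reach the hundreds, while a single SSD starts in the tens of thousands.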

Therefore, if your daily workflow involves compiling software, running Docker containers, or serving dozens of active users simultaneously, caching will make the entire NAS interface much more responsive.

Should I add one?

As I hinted above, it depends on your specific use case. If your NAS functions primarily as a media server for applications like Plex or Emby, an archiving destination for your family photos, or a cold storage vault for automated weekly backups, an SSD cache is generally a waste of money. These workloads consist almost entirely of large, sequential file transfers that are already handled efficiently by mechanical drives. The fast flash memory will sit largely unused, providing no tangible benefit to your viewing or saving experience.

Conversely, if your NAS constantly handles small, random reads and writes, the investment becomes entirely justifiable. If you are running multiple virtual machines directly from your NAS, an SSD cache will significantly reduce boot times and eliminate the slowness typically associated with virtualized desktop environments. Likewise, if your NAS serves as the central file repository for a busy office where multiple employees are constantly opening, editing and saving small documents, the cache will prevent the mechanical drives from being overwhelmed by simultaneous requests.

Before purchasing, you may also want to consider alternative hardware upgrades that could provide a better return on investment. Upgrading your NAS’s system RAM often provides a more immediate and cost-effective performance improvement, as the operating system will naturally use the excess RAM as a super-fast data buffer.

Additionally, if your goal is to move large sequential files faster, upgrading to a ten-gigabit network environment will provide better results than adding cache to a one-gigabit network. Ultimately, an SSD cache is a highly specialized tool that addresses the specific problem of high-latency random I/O, and it should only be implemented if your workload suffers from precisely this bottleneck.