AI is changing PC graphics. Microsoft wants DirectX ready

Summary created by Smart Answers AI

In summary:

- PCWorld reports that Microsoft is integrating AI into DirectX with new tools called DirectX Linear Algebra and DirectX Compute Graph Compiler to revolutionize game rendering.

- Major chipmakers AMD, Intel, and Nvidia are supporting these AI initiatives, potentially allowing integrated GPUs to compete with discrete graphics cards in gaming performance.

- These technologies enable dynamic shader creation, neural texture compression, and advanced scaling that could democratize high-end graphics features like path tracing across different hardware.

Games are increasingly being rendered using AI, which is why Microsoft is integrating AI into how future graphics chips will render games.

On Thursday, Microsoft added DirectX Linear Algebra and the DirectX Compute Graph Compiler to its DirectX programming interface, with previews of each technology expected later this year.

At the very least, Microsoft’s positioning statement regarding the two technologies helps explain where each will fit.

“[Machine learning] is no longer a niche optimization or post-processing trick,” Adele Parsons, a graphics manager at Microsoft, wrote in a blog post. “It’s increasingly integrated throughout the graphics pipeline, influencing how images are generated, how content is created, and how game developers realize their artistic vision. DirectX is evolving to support this future, a future in which ML will be a first-class citizen alongside traditional rendering workloads.”

Most enthusiasts understand how graphics chips use artificial intelligence, or machine learning. Upscaling instructs the GPU to render the scene at a lower, less demanding resolution, then uses AI to scale the image up to the desired output resolution. Frame generation asks the GPU to render one frame, then the next; it then uses AI to interpolate what the player should see in the frames in between. While this can add a bit of latency (lag), it can push frame rates much higher, significantly improving visual smoothness.

Mark Hachman / Foundry

Combined, the two techniques increase the effective output of the GPU, allowing budget GPUs and integrated graphics, like those in Intel’s new Panther Lake chips, to compete with older discrete GPUs in games.
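A back-of-the-envelope model shows why the combination matters. The sketch below assumes illustrative numbers (rendering at 1280x720, upscaling to 2560x1440, 30 rendered frames per second, one generated frame per rendered frame); the function name `effective_output` is hypothetical, and the model ignores the cost of running the AI models themselves.

```python
# Hypothetical back-of-the-envelope model of upscaling plus frame generation.
# The resolutions and frame rates below are illustrative, not measured.

def effective_output(render_res, output_res, rendered_fps, generated_per_rendered):
    """Return (fraction of output pixels the GPU actually shades, displayed fps)."""
    render_w, render_h = render_res
    out_w, out_h = output_res
    # Upscaling: the GPU shades only the low-resolution image; AI fills in the rest.
    shaded_fraction = (render_w * render_h) / (out_w * out_h)
    # Frame generation: each rendered frame is followed by N AI-generated frames.
    displayed_fps = rendered_fps * (1 + generated_per_rendered)
    return shaded_fraction, displayed_fps


fraction, fps = effective_output((1280, 720), (2560, 1440), 30, 1)
print(f"GPU shades {fraction:.0%} of the output pixels; display shows {fps} fps")
# GPU shades 25% of the output pixels; display shows 60 fps
```

In this toy model the GPU shades only a quarter of the pixels and the display still shows twice as many frames as were rendered. The generated frames raise smoothness, not responsiveness: the game still processes input at the rendered rate.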

Max McMullen, Microsoft’s head of software engineering, invited representatives from AMD, Intel and Nvidia to join him on stage at the Game Developers Conference in San Francisco to show their support.

It’s just math

What DirectX Linear Algebra does, simply put, is support the mathematics AI relies on. Traditional GPUs used vector-matrix operations to calculate 3D shapes and lighting. Chips designed for AI, like a new generation of workstation GPUs, use matrix math. And hardware units like Nvidia’s Tensor cores, which have become increasingly important over time, perform matrix-matrix calculations.
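To make the distinction concrete, here is a plain-Python sketch. The function names are hypothetical and are not part of any DirectX or HLSL API. A vector-matrix product transforms one vertex by a 4x4 matrix, the classic graphics workload; a matrix-matrix product does the same for a whole batch of rows at once, which is the shape of work that neural-network layers produce and that units like Tensor cores accelerate in hardware.

```python
# Hypothetical plain-Python sketch; not a DirectX or HLSL API.

def vec_mat(v, m):
    """Row vector v (length n) times matrix m (n x k): transform one vertex."""
    return [sum(v[i] * m[i][j] for i in range(len(v))) for j in range(len(m[0]))]


def mat_mat(a, b):
    """Matrix a (r x n) times matrix b (n x k): transform r rows in one operation.

    Mathematically this is vec_mat applied to every row of a; AI hardware such
    as Tensor cores computes small tiles of this product directly in hardware.
    """
    return [vec_mat(row, b) for row in a]


# 4x4 translation matrix (row-vector convention): moves a point by (2, 3, 4).
TRANSLATE = [
    [1, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 0, 1, 0],
    [2, 3, 4, 1],
]

print(vec_mat([1, 1, 1, 1], TRANSLATE))                  # [3, 4, 5, 1]
print(mat_mat([[1, 1, 1, 1], [0, 0, 0, 1]], TRANSLATE))  # [[3, 4, 5, 1], [2, 3, 4, 1]]
```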

However, DirectX Linear Algebra isn’t so much about giving game developers control over the AI. What Microsoft discovered, according to its blog post, is that some features, like temporal upscaling, depend on matrix math, and that this math works very well when applied inside shaders. Shaders are essentially rendering instructions for your GPU, and they’re usually downloaded before you start playing a game, which Microsoft hates.

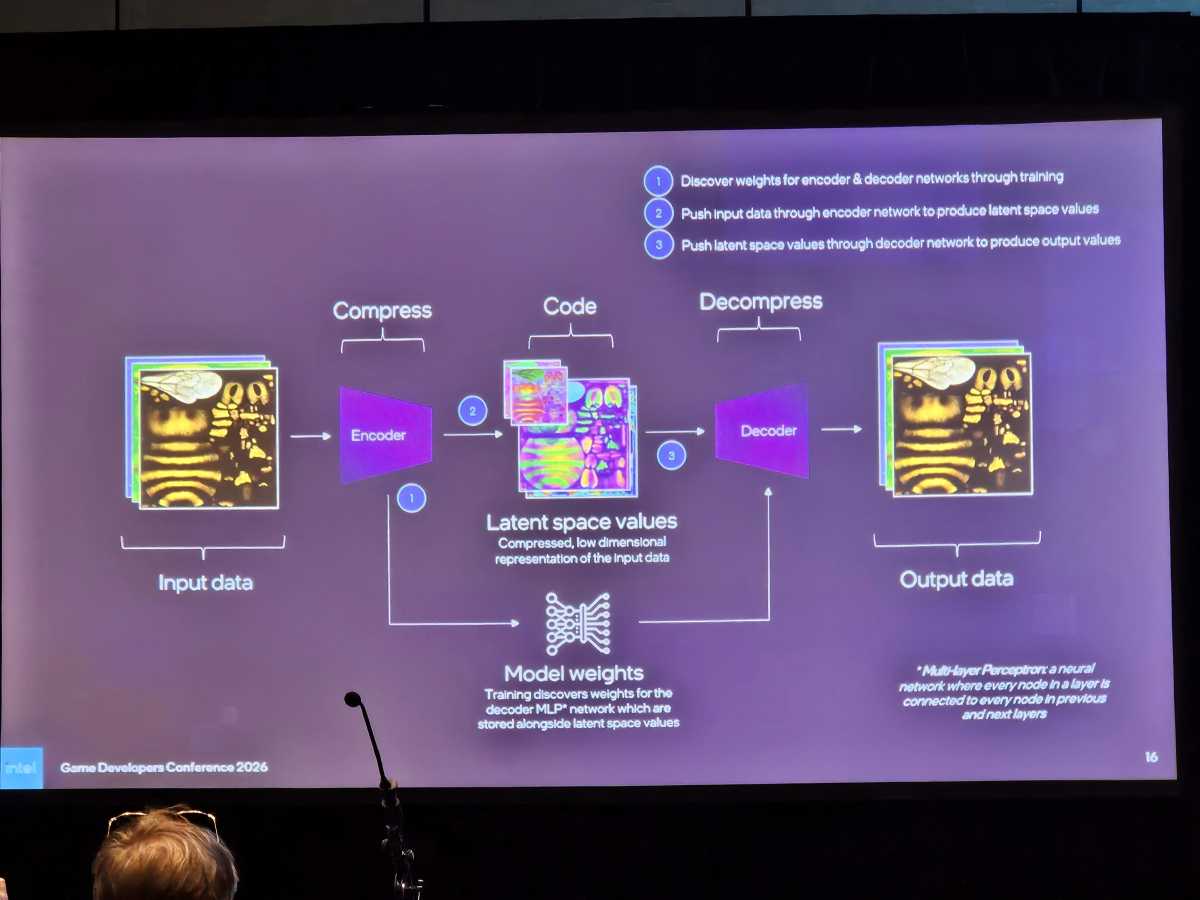

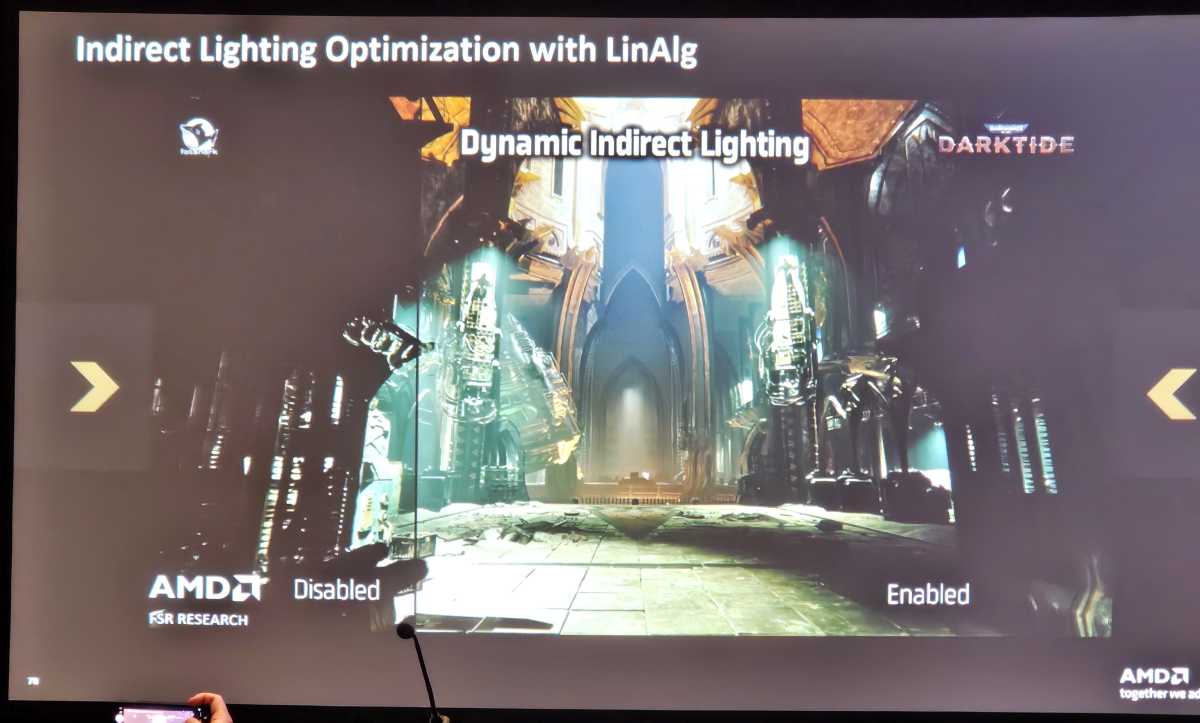

The DirectX Compute Graph Compiler, however, could have a lot more potential. Older tools, like AMD’s first-generation FSR, rendered scenes by examining each pixel and describing how it changed from one frame to the next. But modern versions of FidelityFX Super Resolution (as well as Nvidia DLSS) have migrated to full-model integration, where the entire scene or model is examined. Instead of asking pixels to “move,” the AI essentially calculates where the pixels should be and places them accordingly.

In other words, per-pixel interpolation might not know if a “ball” moved behind a “tree”. The idea is that interpolating the full model would provide a more accurate representation of the scene. What Microsoft is trying to do is migrate this into the DirectX pipeline itself.
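The gap between guessing pixel by pixel and knowing what is actually moving can be shown with a deliberately tiny toy: a one-dimensional “frame” of eight pixels containing a single lit “ball.” Blending two frames pixel by pixel produces two half-bright ghosts, while shifting the ball halfway along a known motion vector puts one full ball in the right place. This is not how DLSS, FSR, or the DirectX Compute Graph Compiler work internally; real frame generation uses trained models, per-pixel motion vectors, and occlusion handling (the ball-behind-a-tree problem), none of which this sketch models. All names here are hypothetical.

```python
# Toy 1D "frames": eight pixels, 1 = ball, 0 = background. Hypothetical example.
FRAME_A = [0, 0, 1, 0, 0, 0, 0, 0]  # ball at pixel 2
FRAME_B = [0, 0, 0, 0, 0, 0, 1, 0]  # ball at pixel 6, so it moved 4 pixels


def blend(a, b):
    """Per-pixel guess: average each pixel of the two frames independently."""
    return [(x + y) / 2 for x, y in zip(a, b)]


def motion_compensated(a, motion):
    """Scene-aware guess: move every lit pixel of frame a halfway along a known
    motion vector (one integer shift for the whole toy scene)."""
    out = [0.0] * len(a)
    for i, value in enumerate(a):
        if value:
            j = i + motion // 2  # roughly halfway between the two rendered frames
            if 0 <= j < len(out):
                out[j] = value
    return out


print(blend(FRAME_A, FRAME_B))         # two half-bright "ghost" balls
print(motion_compensated(FRAME_A, 4))  # one full ball at pixel 4
```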

Among other things, a game built around both DirectX technologies could essentially talk to the GPU and build its own shaders — and could do so in the future for GPUs that weren’t available when the game was published, noted Don Brittain, a distinguished engineer at Nvidia.

Some players, however, reject the notion of “fake frames,” in which the AI attempts to correctly guess what the GPU would otherwise render. These two DirectX technologies would push this concept further. Executives talked about “neural texture compression,” where the AI would essentially guess what a compressed texture should look like once it’s decompressed, and “neural lighting,” where AI would calculate where it thought the light rays should go.

Mark Hachman / Foundry

The upside of that tradeoff is making more advanced features accessible to more players. Neural texture compression could reduce the memory and storage consumed by game textures by as much as 30%, McMullen said. Neural lighting could reduce the need for dedicated ray-tracing units and bring photorealistic “path tracing” to more players.

However, neither technology is close to shipping. The DirectX Compute Graph Compiler will be available in private preview this summer, Microsoft announced, while DirectX Linear Algebra will enter public preview in April. It will take some time after that for both to become part of DirectX proper and then be adopted by the industry as a whole.